00

Abstract

Monte Carlo Tree Search (MCTS) is a heuristic search algorithm renowned for resolving complex decision-making problems through iterative randomized exploration, prominently utilized in game-playing AI and sequential decision-making tasks.

In this paper, we delve into the fundamental structure of MCTS and its applications in robotics and wearable exoskeletons, focusing particularly on robotic follow-ahead scenarios that require obstacle and occlusion avoidance. By integrating MCTS with Deep Reinforcement Learning (DRL), we propose a novel methodology enabling robots to make high-level decisions and generate reliable navigational goals while tracking a human target in uncertain environments.

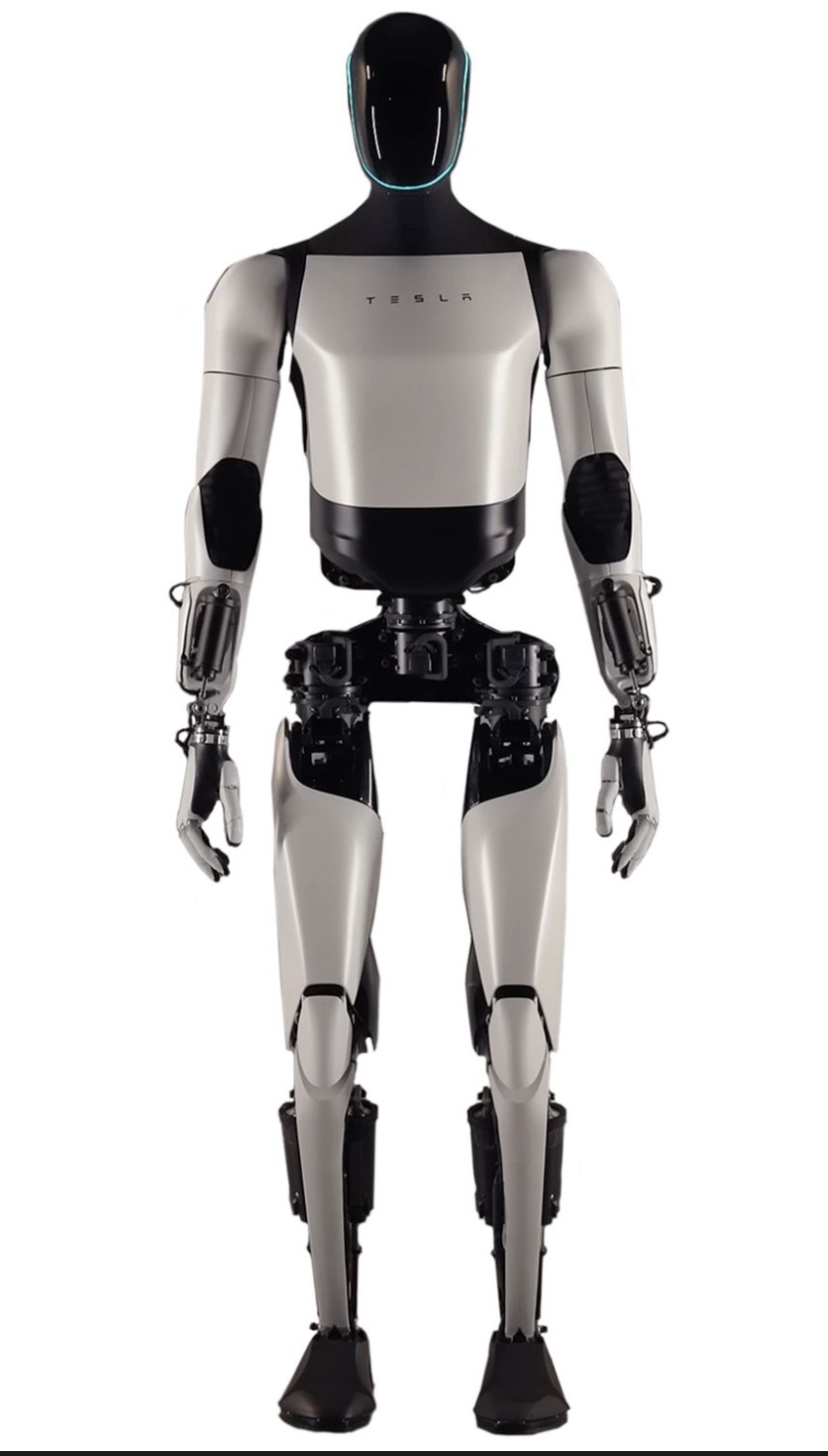

We analyze the balance between exploration and exploitation within MCTS, its predictive capabilities, and how these features support adaptive decision-making and efficient pathfinding. Case studies and implementation examples, including Tesla's Optimus robot, are presented to illustrate the effectiveness of MCTS in real-world applications.

Index Terms: MCTS-DRL, Exoskeleton, Tesla Optimus Robot, SL-MCTS, MCTS

01

Introduction

Human-robot interaction is a rapidly advancing field with applications ranging from autonomous vehicles to assistive robotics. A particularly challenging task within this domain is enabling a robot to follow a human target from ahead, maintaining a safe distance while avoiding obstacles and occlusions.

Traditional methods often struggle to predict human intentions and to navigate dynamic, uncertain environments. Monte Carlo Tree Search (MCTS) is a search algorithm well suited to decision-making under uncertainty.

Initially applied in artificial intelligence for playing board games, MCTS has evolved into a versatile tool used in robotics and in the optimization of multi-agent systems. It relies on stochastic sampling to estimate the expected future reward of candidate actions and uses those estimates to identify promising strategies.

The MCTS Process

The MCTS process comprises four fundamental stages: Selection, Expansion, Simulation, and Backpropagation, each contributing to the growth and adaptability of the search tree over time.
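As an illustration, the four stages can be sketched in Python on a toy single-agent problem. The counting task (reach exactly `TARGET` by adding 1, 2, or 3), the UCT exploration constant, and all function names are assumptions made for this sketch; it is not the implementation evaluated in this paper.

```python
import math
import random

TARGET = 10  # toy goal: reach exactly 10 (assumption for illustration)

def legal_actions(state):
    # No actions remain once the target is reached or overshot.
    return [] if state >= TARGET else [1, 2, 3]

def step(state, action):
    return state + action

def reward(state):
    return 1.0 if state == TARGET else 0.0

class Node:
    def __init__(self, state, parent=None, action=None):
        self.state = state
        self.parent = parent
        self.action = action
        self.children = []
        self.untried = legal_actions(state)
        self.visits = 0
        self.total_reward = 0.0

def uct_child(node, c=1.4):
    # Mean reward (exploitation) plus a visit-count bonus (exploration).
    return max(
        node.children,
        key=lambda ch: ch.total_reward / ch.visits
        + c * math.sqrt(math.log(node.visits) / ch.visits),
    )

def mcts(root_state, iterations=1000, rng=None):
    rng = rng or random.Random(0)
    root = Node(root_state)
    for _ in range(iterations):
        node = root
        # 1. Selection: descend while the node is fully expanded.
        while not node.untried and node.children:
            node = uct_child(node)
        # 2. Expansion: add one child for an untried action.
        if node.untried:
            action = node.untried.pop(rng.randrange(len(node.untried)))
            child = Node(step(node.state, action), parent=node, action=action)
            node.children.append(child)
            node = child
        # 3. Simulation: random rollout until a terminal state.
        state = node.state
        while legal_actions(state):
            state = step(state, rng.choice(legal_actions(state)))
        value = reward(state)
        # 4. Backpropagation: update statistics along the path to the root.
        while node is not None:
            node.visits += 1
            node.total_reward += value
            node = node.parent
    # Recommend the most-visited action at the root.
    return max(root.children, key=lambda ch: ch.visits).action
```

Calling `mcts(9)` recommends the action `1`, since it is the only move from 9 that lands exactly on the target. Returning the most-visited child rather than the child with the highest mean is a common design choice because visit counts are less sensitive to a few lucky rollouts.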

In this paper, we explore how integrating MCTS with Deep Reinforcement Learning (DRL) offers a promising solution to the challenges of robotic follow-ahead applications.

02

Methodology

2.1 The Challenge: Obstacle and Occlusion Avoidance

In robotic follow-ahead applications, a robot must navigate in front of a human, maintaining a consistent distance and orientation. This task is complex due to:

- Predicting Human Intentions: The robot must anticipate the human's future movements

- Dynamic Environments: Obstacles and potential occlusions can obstruct the robot's path or line of sight

- Safety Requirements: Avoiding collisions is critical for both human and robot safety
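The distance and orientation requirement can be made concrete with a little geometry. The sketch below assumes a planar pose `(x, y, heading)` for the human, a heading in radians, and a hypothetical desired follow-ahead distance of 1.5 m; none of these values come from the experiments reported here.

```python
import math

def follow_ahead_goal(human_x, human_y, human_heading, distance=1.5):
    """Point `distance` metres ahead of the human along the heading."""
    goal_x = human_x + distance * math.cos(human_heading)
    goal_y = human_y + distance * math.sin(human_heading)
    return goal_x, goal_y

def tracking_errors(robot_x, robot_y, human_x, human_y, human_heading, distance=1.5):
    """Distance error (m) and angular error (rad) relative to the ideal pose."""
    dx, dy = robot_x - human_x, robot_y - human_y
    distance_error = math.hypot(dx, dy) - distance
    bearing = math.atan2(dy, dx)
    # Wrap the bearing difference into [-pi, pi].
    diff = bearing - human_heading
    angle_error = math.atan2(math.sin(diff), math.cos(diff))
    return distance_error, angle_error
```

Both errors are zero when the robot sits exactly at the point returned by `follow_ahead_goal`; a reward function for planning or learning could penalize their magnitudes.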

2.2 How MCTS Enhances Robotic Navigation

MCTS enables the robot to:

- Make High-Level Decisions: Generate short-term navigational goals

- Efficiently Explore Decision Space: Focus on promising paths

- Avoid Obstacles and Occlusions: Incorporate environmental data
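One simple way to incorporate environmental data is to discard candidate goals from which the human would be hidden. The sketch below assumes circular obstacles given as hypothetical `(x, y, radius)` tuples and treats the line of sight as a straight segment; a real system would likely use a map or sensor model instead.

```python
import math

def segment_point_distance(ax, ay, bx, by, px, py):
    """Shortest distance from point P to the segment AB."""
    abx, aby = bx - ax, by - ay
    length_sq = abx * abx + aby * aby
    if length_sq == 0.0:
        return math.hypot(px - ax, py - ay)
    # Project P onto AB and clamp the projection to the segment.
    t = ((px - ax) * abx + (py - ay) * aby) / length_sq
    t = max(0.0, min(1.0, t))
    return math.hypot(px - (ax + t * abx), py - (ay + t * aby))

def is_occluded(robot, human, obstacles):
    """True if any circular obstacle intersects the robot-human sight line."""
    return any(
        segment_point_distance(robot[0], robot[1], human[0], human[1], ox, oy) < r
        for ox, oy, r in obstacles
    )

def visible_goals(candidates, human, obstacles):
    """Keep only candidate goals with a clear line of sight to the human."""
    return [c for c in candidates if not is_occluded(c, human, obstacles)]
```

Because the segment includes the candidate point itself, a candidate lying inside an obstacle is also rejected, so the same test covers a basic collision check for the goal position.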

2.3 Role of Deep Reinforcement Learning

DRL provides a trained policy that estimates the expected rewards for actions, aiding MCTS in evaluating nodes during tree expansion. This integration improves the consistency and reliability of the navigational goals generated.
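A hedged sketch of one way such a coupling can look: when a node is expanded, each child is seeded with the learned reward estimate instead of waiting for a random rollout. The `q_estimate` callable stands in for the trained DRL policy, and `toy_q`, `move`, and `GOAL` are hypothetical placeholders introduced only so the example runs.

```python
import math
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

State = Tuple[float, float]
Action = Tuple[float, float]

@dataclass
class Node:
    state: State
    visits: int = 0
    total_value: float = 0.0
    children: Dict[Action, "Node"] = field(default_factory=dict)

def expand_with_estimates(
    node: Node,
    actions: List[Action],
    transition: Callable[[State, Action], State],
    q_estimate: Callable[[State, Action], float],
) -> Action:
    """Create one child per action, seeded with the learned reward estimate."""
    for action in actions:
        child = Node(state=transition(node.state, action))
        child.visits = 1  # count the estimate as one pseudo-visit
        child.total_value = q_estimate(node.state, action)
        node.children[action] = child
    # Return the action whose child currently looks most promising.
    return max(node.children, key=lambda a: node.children[a].total_value)

GOAL: State = (2.0, 0.0)  # hypothetical navigation target

def move(state: State, action: Action) -> State:
    return (state[0] + action[0], state[1] + action[1])

def toy_q(state: State, action: Action) -> float:
    # Placeholder for a trained network: negative distance to GOAL after moving.
    nx, ny = move(state, action)
    return -math.hypot(GOAL[0] - nx, GOAL[1] - ny)
```

With a root at `(0.0, 0.0)` and the actions `(1.0, 0.0)`, `(0.0, 1.0)`, and `(-1.0, 0.0)`, the function returns `(1.0, 0.0)`, the step that moves toward `GOAL`. Seeding children this way gives selection a usable signal from the first visit, which is one plausible source of more consistent goals.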

03

Experiments & Results

3.1 Performance Comparison

We compared the MCTS-DRL method against standard MCTS and DRL algorithms in a simulated environment with circular and S-shaped human movement patterns.

| Human Trajectory | DRL | MCTS | MCTS-DRL |
|---|---|---|---|
| Circle | −17.95 | 2.87 ± 5.96 | 4.53 |
| S-shaped | −21.84 | −3.83 ± 4.33 | −1.61 |

3.3 Obstacle and Occlusion Avoidance

- Straight Path: The robot maintained its position in front of the human; when obstacles were present, it adjusted its path to avoid occlusion.

- U-Shaped Path: The robot adjusted its path at ~12 s to avoid occlusion rather than navigating around the obstacle.

- S-Shaped Path: The robot altered course at ~17 s to avoid occlusion while maintaining follow-ahead behavior.

- L-Shaped Corridor: The robot adjusted its trajectory at the corner, turning right to avoid collisions.

3.4 SL-MCTS vs Traditional MCTS

| Metric | Traditional MCTS | SL-MCTS |
|---|---|---|
| Success Rate | 78% | 92% |
| Average Path Length | 15 steps | 12 steps |
| Computation Time | 2.4 s | 1.3 s |
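The relative differences implied by the table can be checked with a few lines of arithmetic. The dictionary keys below are labels chosen for this sketch; the numbers are taken directly from the table.

```python
# (Traditional MCTS, SL-MCTS) values copied from the table above.
results = {
    "success_rate_pct": (78.0, 92.0),
    "avg_path_length_steps": (15.0, 12.0),
    "computation_time_s": (2.4, 1.3),
}

def relative_change(baseline, new):
    """Percentage change of `new` relative to `baseline`."""
    return (new - baseline) / baseline * 100.0

for name, (baseline, new) in results.items():
    print(f"{name}: {relative_change(baseline, new):+.1f}%")
```

In these terms, SL-MCTS raises the success rate by 14 percentage points, shortens the average path by 20%, and cuts computation time by roughly 46%.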

04

Conclusion

This study presents an approach for robotic follow-ahead applications that focuses on avoiding collisions and occlusions caused by obstacles in the environment.

✓ The proposed MCTS-DRL approach outperforms standalone MCTS and DRL algorithms

✓ Effectively follows a target person from the front while maintaining a safe distance

✓ Works reliably regardless of whether obstacles are present

✓ Demonstrates potential to improve autonomous robotic navigation