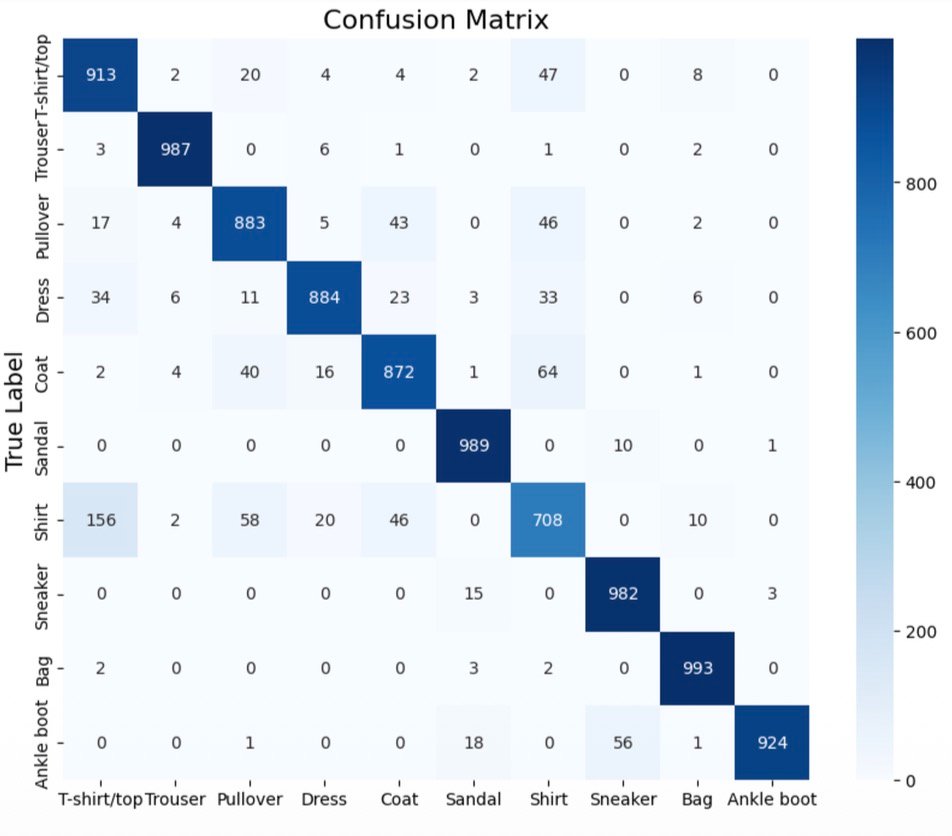

This research project applies machine learning techniques to the Fashion MNIST dataset, classifying 28×28 grayscale images of fashion items into ten categories: T-shirts, trousers, pullovers, dresses, coats, sandals, shirts, sneakers, bags, and ankle boots.

The project implements an ML pipeline that compares deep learning architectures (CNN and ResNet) with traditional methods such as Logistic Regression, SVM, and Random Forest. Preprocessing consisted of normalizing pixel values to the [0, 1] range and reshaping the images to the input shape each model expects.
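To make the classical side of the comparison concrete, here is a minimal sketch of how the three traditional models could be scored on flattened, normalized images. The helper names `flatten_and_scale` and `evaluate_baselines`, the hyperparameters, and the synthetic stand-in data are assumptions for illustration only; they are not the project's actual settings or results.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.svm import SVC


def flatten_and_scale(images):
    """Scale uint8 pixels to [0, 1] and flatten each 28x28 image to 784 features."""
    return images.reshape(len(images), -1).astype("float32") / 255.0


def evaluate_baselines(X_train, y_train, X_test, y_test):
    """Fit each classical model and return {model name: test accuracy}."""
    # Hyperparameters below are illustrative guesses, not tuned values.
    models = {
        "Logistic Regression": LogisticRegression(max_iter=200),
        "SVM": SVC(kernel="rbf"),
        "Random Forest": RandomForestClassifier(n_estimators=50, random_state=0),
    }
    scores = {}
    for name, model in models.items():
        model.fit(X_train, y_train)
        scores[name] = accuracy_score(y_test, model.predict(X_test))
    return scores


# Synthetic stand-in with the Fashion MNIST image shape (NOT the real data),
# so the sketch runs without downloading anything.
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(300, 28, 28), dtype=np.uint8)
labels = rng.integers(0, 10, size=300)

X = flatten_and_scale(images)
scores = evaluate_baselines(X[:200], labels[:200], X[200:], labels[200:])
for name, acc in scores.items():
    print(f"{name}: {acc:.3f}")
```

On random pixels the accuracies are meaningless; to use the sketch on the real dataset, pass the arrays returned by `fashion_mnist.load_data()` through `flatten_and_scale` instead.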

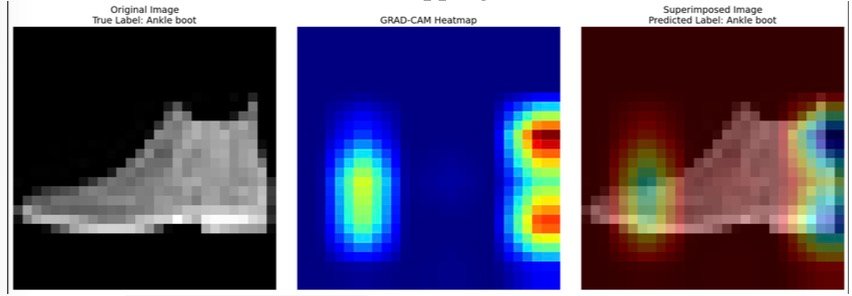

Grad-CAM visualizations make the CNN's predictions more interpretable by highlighting the image regions that drive each prediction, which helps build trust in model decisions for real-world applications in e-commerce, fashion retail, and manufacturing.
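The core Grad-CAM computation can be sketched in NumPy as follows. This is a simplified illustration, not the project's implementation: it assumes the feature maps of a convolutional layer and the gradients of the class score with respect to those maps have already been extracted (in a Keras model this would typically be done with `tf.GradientTape`), and the function name `grad_cam_heatmap` is hypothetical.

```python
import numpy as np


def grad_cam_heatmap(feature_maps, gradients):
    """Combine conv feature maps (H, W, K) with class-score gradients (H, W, K)."""
    # Channel importance: global-average-pool the gradients over space.
    weights = gradients.mean(axis=(0, 1))  # shape (K,)
    # Weighted sum of feature maps, then ReLU to keep positive evidence only.
    cam = np.maximum((feature_maps * weights).sum(axis=-1), 0.0)  # shape (H, W)
    # Scale to [0, 1] for display; an all-zero map is returned unchanged.
    peak = cam.max()
    return cam / peak if peak > 0 else cam


# Tiny hand-made example: channel 0 supports the class, channel 1 opposes it.
feature_maps = np.array([[[1.0, 0.0]], [[0.0, 1.0]]])  # shape (2, 1, 2)
gradients = np.array([[[1.0, -1.0]], [[1.0, -1.0]]])   # shape (2, 1, 2)
heatmap = grad_cam_heatmap(feature_maps, gradients)
print(heatmap)
```

In this toy case the location where the supporting channel is active is highlighted and the other is suppressed. For display, the heatmap would normally be resized to 28×28 and overlaid on the input image.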

Python, TensorFlow, Keras, Scikit-learn, NumPy, Matplotlib, Seaborn, Plotly

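```python
from tensorflow.keras.datasets import fashion_mnist

# Load the 60,000 training and 10,000 test images with integer labels 0-9.
(X_train, y_train), (X_test, y_test) = fashion_mnist.load_data()

# Add a channel axis for the CNN and scale pixel values from [0, 255] to [0, 1].
X_train_cnn = X_train.reshape(-1, 28, 28, 1) / 255.0
X_test_cnn = X_test.reshape(-1, 28, 28, 1) / 255.0

# Human-readable names, indexed by label.
class_names = ['T-shirt/top', 'Trouser',
               'Pullover', 'Dress', 'Coat',
               'Sandal', 'Shirt', 'Sneaker',
               'Bag', 'Ankle boot']
```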
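As a rough illustration of how arrays shaped like `X_train_cnn` could feed a CNN, the sketch below builds a small Keras model. The layer sizes, optimizer, and the function name `build_cnn` are assumptions chosen for brevity; the project's actual CNN and ResNet architectures are not specified here.

```python
import numpy as np
import tensorflow as tf


def build_cnn(num_classes=10):
    """Build a small CNN for 28x28x1 inputs (illustrative layer sizes)."""
    model = tf.keras.Sequential([
        tf.keras.Input(shape=(28, 28, 1)),
        tf.keras.layers.Conv2D(32, 3, activation="relu"),
        tf.keras.layers.MaxPooling2D(),
        tf.keras.layers.Conv2D(64, 3, activation="relu"),
        tf.keras.layers.MaxPooling2D(),
        tf.keras.layers.Flatten(),
        tf.keras.layers.Dense(128, activation="relu"),
        tf.keras.layers.Dense(num_classes, activation="softmax"),
    ])
    # Labels are integers 0-9, so the sparse variant of cross-entropy applies.
    model.compile(optimizer="adam",
                  loss="sparse_categorical_crossentropy",
                  metrics=["accuracy"])
    return model


model = build_cnn()

# Sanity check on a dummy batch: one probability vector per image.
dummy_batch = np.zeros((2, 28, 28, 1), dtype="float32")
probs = model.predict(dummy_batch, verbose=0)
print(probs.shape)
```

With the data loaded as above, training would look like `model.fit(X_train_cnn, y_train, epochs=5, validation_split=0.1)`, where the epoch count and validation split are again placeholder values.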